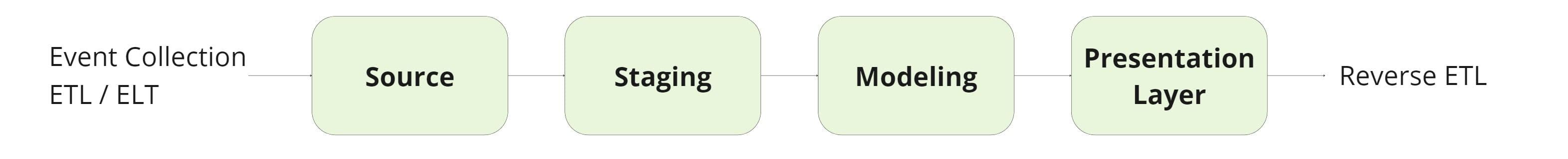

Data engineering has come a long way in the last few years, yet the quest for building robust and agile data teams is ongoing. Implementing data warehouse layers has emerged as a popular and effective method to organize the flow of data from ETL to Reverse ETL and serve as a proxy for data maturity.

Critics would be valid to argue that adding additional warehouse layers can introduce unnecessary abstraction. However, in this blog post, I will showcase how you can leverage data warehouse layers in a way that avoids excessive complexity to power your downstream use cases.

What are Data Warehouse Layers?

Data warehouse layers are a structured approach to managing your organization’s data flow. A layered data architecture aims to ingest and transform all of your customer and business data so it is readily available and usable by your business and marketing teams for analytics and activation.

This structured approach to data warehousing gives you more visibility and control over your data flows and introduces structure and organization so you can more easily manage your data and scale layers as needed.

The Complete Guide to Reverse ETL

Read our whitepaper to learn where Reverse ETL fits in the modern data stack.

Types of Data Layers

In layered data modeling, each layer builds on the previous one, starting with ingesting raw data and resulting in a final view containing clean transformed data that is highly functional. There are only four types of data warehouse layers, each serving a slightly different purpose.

The Source Layer

Your data sources are the starting point for your entire data stack, where all your data is generated. Your data sources act as the foundation for your raw data, serving as an “identity property” layer with minimal transformation. Aside from basic naming changes, no actions are taken on this data. Your source layer can even live outside your data warehouse.

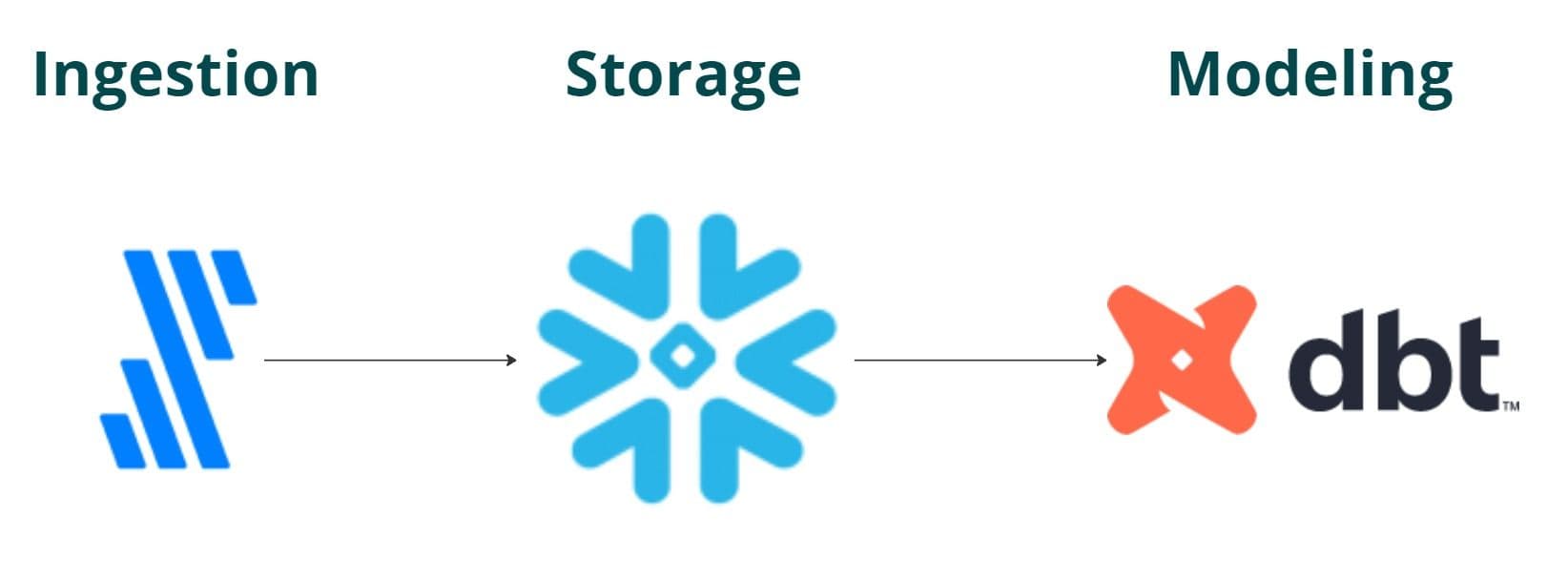

At Hightouch, setting up data layers involves leveraging our partner ecosystem. For the source layer in particular, we use Fivetran to ingest the data and Snowflake to store it before locking it down via dbt models. The flow looks something like this:

The Staging Layer

The staging layer acts as a buffer zone between your source and modeling layers, and it’s where you store and centralize all of your data before it’s transformed. Only light data transformations occur in this stage, like filtering, validation, or normalization. The purpose of the staging layer is to help you consolidate your data so you can build models to power your business.

With ETL processes, the staging layer is outside your data warehouse, and with ELT, the staging layer is inside your data warehouse. The key takeaway here is that the staging layer owns non-business-logic transformations to keep the modeling layer clean.

The Modeling Layer

The modeling layer is the “intermediate” or “silver” layer responsible for producing common building blocks and providing a working space to address your downstream use cases. The purpose of this layer is to transform and merge your data into coherent models that answer important questions unique to your business.

This layer “soaks up complexity” specific to business logic and activation needs. Ideally, models built here can be reused effectively across multiple use cases and business units. A simple example would be a model for Customer Lifetime Value, which aggregates and calculates the lifetime value of customers based on purchase history.

Column names could be first_purchase_date, total_purchases, total revenue, average, or even churn propensity. Mature data teams spend time focusing on analyzing, enriching, and providing flexible building blocks to use downstream.

The Presentation Layer

The presentation layer acts as the serving layer designed for reporting and activation. There is a high degree of attention here as this is the final layer in the acyclic graph. The tables in this layer are structured to optimize query performance. The end goal in this layer is to create curated datasets that can serve end users and business teams.

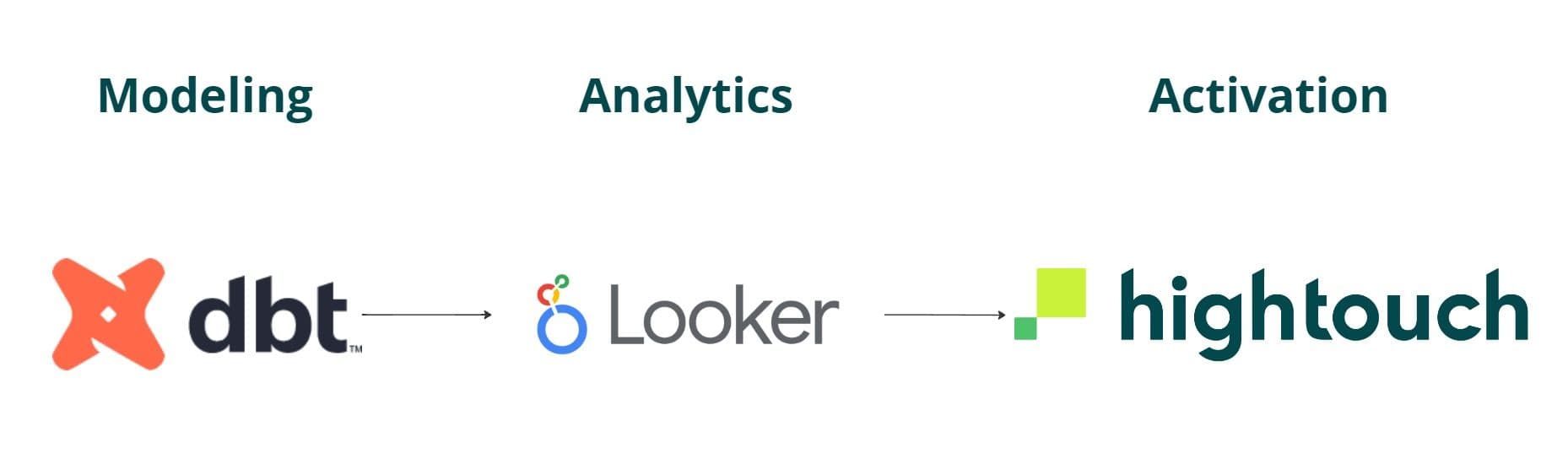

Building off our Hightouch example, we continue leveraging our partner data ecosystem to power our data lifecycle before finally activating it.

We use this layer to serve our success, sales, marketing, operations, and finance teams. There’s a lot of value to be derived from just interacting at the presentation layer. More importantly, by simply targeting the right layer of abstraction, data teams are better equipped to simplify what parts of their data flow should be exposed in a self-serve way to non-technical users.

Avoiding Unnecessary Abstraction

While critics may argue that the data warehouse layers introduce unneeded abstraction, it is essential to understand that these layers serve as virtual categories rather than strict, physical divisions. Data teams can loosely map their data flow to these layers, allowing for flexibility and adaptability.

By doing so, they reap the benefits of an organized workflow that is flexible and robust, all without succumbing to excessive complexity. However, it’s important to strike a balance between abstraction and practical implementation. That is, your data warehouse layers should serve as a guide rather than a rigid structure that stifles innovation and creativity.

At this point, it’s also worth noting that the paradigm of creating structure around data architecture is nothing new. In fact, the idea of layered architecture emerged in the 1980s and 1990s as organizations recognized the importance of structuring and organizing their data to enable effective analytics and decision-making.

Moreover, you may have heard the same idea expressed differently over the years. For example, the bronze, silver, and gold layers solve the same need to refine and categorize data as it moves through a pipeline.

The bronze layer aligns with the source layer, representing raw and unprocessed data. The silver layer, also known as the modeling layer, encapsulates business logic and creates reusable models for multiple use cases. Lastly, the gold layer corresponds to the presentation layer, focusing on activation, presentation, and reporting. Over the years, these layers may change names and may continue to morph, but the key takeaway remains intact: *structure helps teams that manage their data thoughtfully.

Best Practices for Embracing Data Warehouse Layers

At face value, implementing data warehouse layers is relatively simple. However, you can implement several best practices to optimize your architecture.

- Focus on flexibility: Remember that the data warehouse layers are virtual categories. Adapt them to your team's unique needs. Grouping models and organizing your data flow according to these layers will provide clarity and structure without rigid constraints.

- Maintain traceability: To ensure that your data flow remains transparent and easily accessible, maintain a history that connects the presentation layer back to the source layer. This will help you avoid unnecessary clutter and versioning of tables in the final layer.

- Keep security and scrutiny in mind: Pay extra attention to the presentation layer, where data is used by your end customers. Implement strict security measures and maintain high standards for data quality in this layer to protect your data ecosystem and your customers' trust.

Why Data Layers Matter

The data warehouse layers provide a solid foundation for organizing your data flow from ETL to Reverse ETL, without introducing unnecessary abstraction. This will ensure your data teams:

- Avoid redundant work

- Easily manage data dependencies (with tools like dbt)

- Focus on data security and finalization at the presentation layer

- Visualize and act strategically on their data ecosystem (e.g., identify bottlenecks or sources of complexity)

With a strong presentational layer, Data Activation platforms play a complementary and vital role in streamlining the data flow process to your end users by significantly reducing manual labor, improving data accuracy, and allowing your team to focus on more strategic and creative tasks (like rewind campaigns.)

By embracing this paradigm with flexibility and a focus on security, traceability, and the efficient use of reverse ETL solutions, you can iterate quickly and take your data operations to new heights.